7 Things You Need to Learn About Particles In Computer Graphics

Particle systems are ubiquitous in computer graphics. They’re used for modeling snow, explosions, sparks, rain, grass, and all sorts of other things that are too hard to model and animate by hand.

A particle system is simply a collection of particles, which are tiny dots that can be made to look like anything, from smoke plumes to soccer balls. Particles are a very important tool in any CG artist’s arsenal, particularly so for animators and effects artists.

They can add realism to a scene or enhance it with otherworldly effects. If you’ve seen a big-budget movie in the past 10 years, odds are that at least one explosion or dust storm in it was modeled in a 3D package and composited in after the scene was shot. Particles make such effects possible and practical to produce.
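A particle system really is just a collection of particles plus a loop that creates, updates, and removes them. The Python sketch below shows that loop, including a point emitter and an area emitter of the kind discussed in the Emitters section. Everything in it is an assumption for illustration: `Particle`, `point_emitter`, `area_emitter`, and `step` are hypothetical names rather than any package's API, and motion is reduced to simple Euler integration under constant gravity.

```python
from __future__ import annotations

import random
from dataclasses import dataclass


@dataclass
class Particle:
    """One particle: where it is, how it moves, and how long it lives (hypothetical)."""
    position: tuple[float, float, float]
    velocity: tuple[float, float, float]
    lifespan: float      # seconds the particle lives before it dies
    age: float = 0.0     # seconds since birth

    @property
    def alive(self) -> bool:
        return self.age < self.lifespan


def point_emitter(origin, count, speed, lifespan, rng):
    """Birth: spawn `count` particles at one point, flying off in random directions."""
    born = []
    for _ in range(count):
        # A normalized Gaussian vector gives a uniformly random direction.
        d = [rng.gauss(0.0, 1.0) for _ in range(3)]
        length = sum(c * c for c in d) ** 0.5 or 1.0
        velocity = tuple(speed * c / length for c in d)
        born.append(Particle(tuple(origin), velocity, lifespan))
    return born


def area_emitter(corner, width, depth, count, velocity, lifespan, rng):
    """Birth: spawn `count` particles at random spots on a horizontal rectangle."""
    x0, y0, z0 = corner
    return [
        Particle((x0 + rng.uniform(0.0, width), y0, z0 + rng.uniform(0.0, depth)),
                 tuple(velocity), lifespan)
        for _ in range(count)
    ]


def step(particles, dt, gravity=(0.0, -9.8, 0.0)):
    """Life and death: move every particle one time step, age it, drop the dead."""
    for p in particles:
        p.velocity = tuple(v + g * dt for v, g in zip(p.velocity, gravity))
        p.position = tuple(x + v * dt for x, v in zip(p.position, p.velocity))
        p.age += dt
    return [p for p in particles if p.alive]


if __name__ == "__main__":
    rng = random.Random(0)
    sparks = point_emitter((0.0, 0.0, 0.0), 50, speed=5.0, lifespan=0.5, rng=rng)
    snow = area_emitter((-10.0, 20.0, -10.0), 20.0, 20.0, 200,
                        velocity=(0.0, -1.0, 0.0), lifespan=30.0, rng=rng)
    particles = sparks + snow
    for _ in range(30):                  # one second at 30 frames per second
        particles = step(particles, 1 / 30)
    print(len(particles))                # prints 200: the short-lived sparks are gone
```

The sparks and the snow share one list, yet their different lifespans alone decide which survive, which is why emitters usually set lifespan at birth.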

Renderer Types

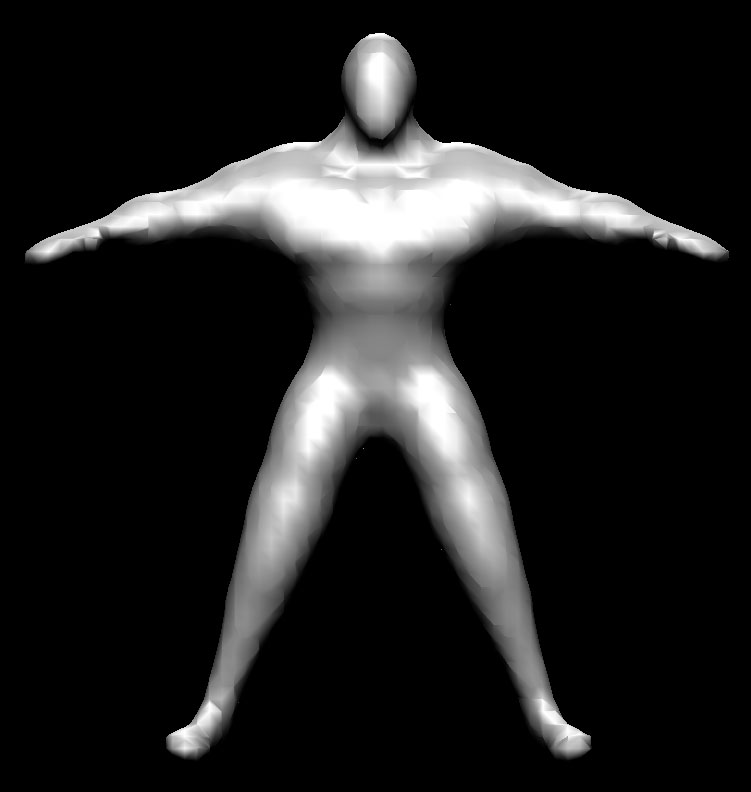

Particle system renderers fall into two groups: 2D renderers and 3D renderers. 2D rendering is much faster than 3D rendering, and certain concerns that are prevalent in a 3D scene, such as two-sided materials and mesh complexity, simply don’t exist in 2D. 2D renderers are mostly built into engines, since their speed is more than enough for real-time applications like scientific visualization or games, but there are a handful of standalone 2D particle packages, with particleIllusion being the most prominent example. 3D particle renderers are more common, and most packages with a 3D renderer, such as Maya, 3ds Max, LightWave, or Softimage, include a particle system. In a 3D context, particles are used to imitate real-world phenomena that require at least some realism, such as casting proper shadows or being refracted through a scene object. That sort of thing is rather challenging with a 2D package, even with a capable compositing application. An artist therefore sometimes has to trade the speed and ease of use of 2D renderers against the realism and versatility of 3D renderers.

Emitters

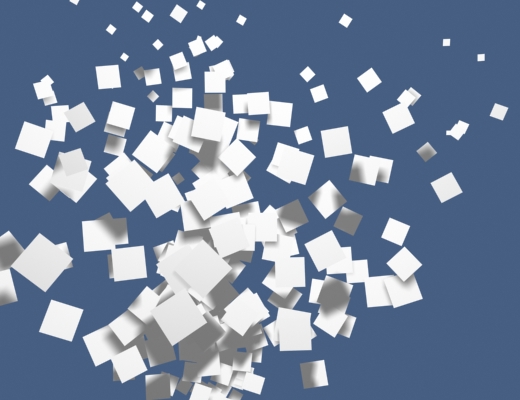

Emitters give birth to particles. The terms “birth,” “life,” and “death” are prominent in particle jargon because they aptly describe the logical structure of a particle animation: particles are born from an emitter, modified in some way (usually animated), age, and then die. An emitter usually assigns important initial parameters to its particles, such as their motion and their lifespan. Different tasks call for different types of emitters, but the two main types are point emitters and area emitters. Point emitters emit particles from a single point, while area emitters emit particles over a specified area. For effects like gunshots or dust trails, point emitters work best, since these effects represent a local reaction of an object, such as the firing of a gun or the wheel of a car against a dusty plain. Point emitters are quite inadequate, however, for effects like snowstorms or waterfalls. This is where area emitters come in. An area emitter releases particles across the entire area it covers, which is perfect for the large coverage a snowstorm requires. What would ordinarily take several point emitters and a lot of tweaking can easily be done with a single area emitter. There are other types of emitters as well, like 3ds Max’s Particle Array, which emits particles from the surface of any object; it comes in handy when objects need to be blown apart or when dust or other particles need to be lifted off a surface.

Billboard Particles

Billboard particles are flat images that are kept turned toward the camera, so they work best in situations where the “other side” of a particle is unimportant. That is, if the viewer sees the effect head-on or from very far away, and the camera never moves around it, it’s much easier to use billboard particles and approximate depth as necessary. Smokestacks, faraway fires, snow, rain, and some kinds of sparks are best done with billboard particles.
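Keeping a flat sprite turned toward the camera comes down to building two axes perpendicular to the line of sight and placing the sprite’s corners along them. Here is a minimal Python sketch of one common variant, where each sprite faces the camera’s position; the helper names and the default world “up” direction of +Y are assumptions for illustration, not any package’s API.

```python
import math


def sub(a, b):
    return tuple(x - y for x, y in zip(a, b))


def add(a, b):
    return tuple(x + y for x, y in zip(a, b))


def scale(a, s):
    return tuple(x * s for x in a)


def cross(a, b):
    return (a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0])


def normalize(a):
    length = math.sqrt(sum(x * x for x in a))
    return tuple(x / length for x in a)


def billboard_corners(center, camera_pos, size, world_up=(0.0, 1.0, 0.0)):
    """Corners of a square sprite centered on `center`, turned to face the camera.

    Breaks down if the camera sits straight along `world_up` from the sprite
    (the cross product becomes zero), a case real renderers must special-case.
    """
    to_camera = normalize(sub(camera_pos, center))
    right = normalize(cross(world_up, to_camera))  # sprite's local x axis
    up = cross(to_camera, right)                   # sprite's local y axis, already unit length
    h = size / 2.0
    return [add(center, add(scale(right, sx * h), scale(up, sy * h)))
            for sx, sy in ((-1, -1), (1, -1), (1, 1), (-1, 1))]
```

A cheaper “screen-aligned” variant reuses the camera’s own right and up vectors for every sprite, so all billboards share one orientation; either way, the sprite has no back side, which is exactly why billboards suit effects the camera never circles.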

Voxels

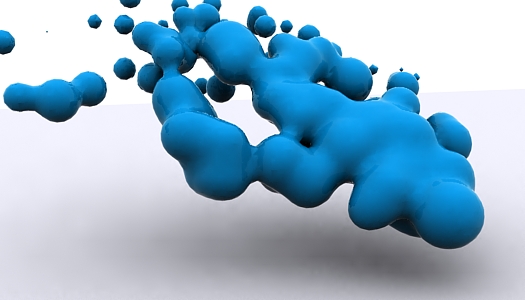

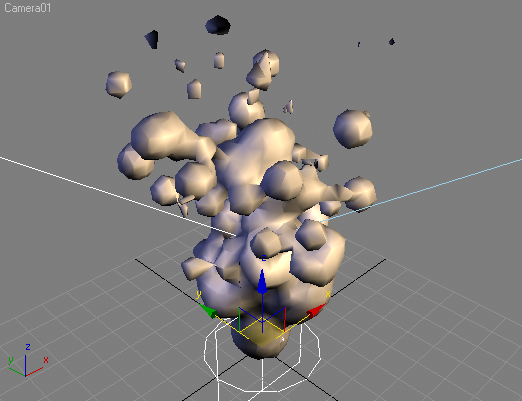

The term “voxel” is a portmanteau of “volumetric pixel.” Where pixels on the screen are tiny dots arranged along the vertical and horizontal dimensions, voxels are arranged along a third, depth dimension as well. Think of voxels as blocks stacked together, where one voxel may entirely obscure the voxel behind it. This property is essential when using voxels in particle systems, where transparency and occlusion between particles are important for modeling certain phenomena. Any effect that requires a high degree of realism, such as clouds, fire, or explosions, can be approached with voxels. Packages like LightWave have voxel rendering built in, while others, like 3ds Max, can be extended with plug-ins such as FumeFX. Generally, though, volumetric particle effects are not included by default in most 3D packages.

Metaballs

Particle Age

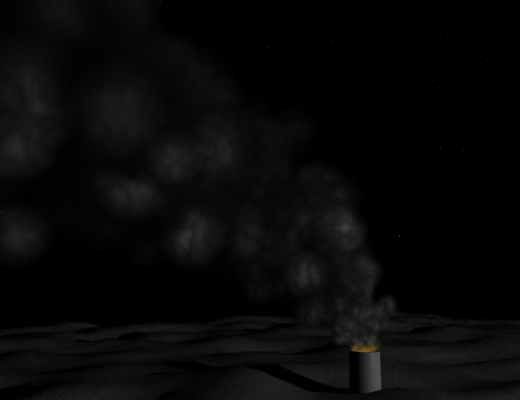

Particle age is an important concept because it determines what happens to a particle’s appearance as it moves through its lifespan. In real life, a fire has many stages: flames, soot, smoke. Sometimes the smoke is black, sometimes it’s white, and sometimes it transitions from one to the other because of a substance in the fire. These changes can be modeled using age-dependent particle materials and settings. Emitters usually provide a few age-dependent settings, such as size and speed, but they can’t change whether a particle looks like a flame or like black smoke. That’s where age-dependent materials come in. Particles can change not only their color but also their apparent shape, and they can even be made to play back an animation, such as a man walking. In branding shots and advertisements, where graphics are used for symbolism rather than realism, such versatility comes in very handy.
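Under the hood, an age-dependent material boils down to looking up a particle’s appearance from its normalized age (age divided by lifespan). Here is a minimal Python sketch; the color stops in `FIRE_GRADIENT` are made-up values chosen to echo the flame, soot, and smoke stages above, and all function names are hypothetical rather than any package’s API.

```python
# Hypothetical color stops for a fire particle: (normalized age, (r, g, b, alpha)).
FIRE_GRADIENT = [
    (0.0, (1.0, 0.9, 0.3, 1.0)),   # bright yellow flame
    (0.3, (0.9, 0.3, 0.05, 0.9)),  # orange flame
    (0.5, (0.1, 0.1, 0.1, 0.8)),   # black soot
    (1.0, (0.6, 0.6, 0.6, 0.0)),   # gray smoke fading out
]


def normalized_age(age, lifespan):
    """Map age to 0..1 so one gradient fits particles with any lifespan."""
    return min(max(age / lifespan, 0.0), 1.0)


def color_at_age(age, lifespan, gradient=FIRE_GRADIENT):
    """Linearly interpolate between the two color stops surrounding the particle's age."""
    t = normalized_age(age, lifespan)
    for (t0, c0), (t1, c1) in zip(gradient, gradient[1:]):
        if t <= t1:
            u = (t - t0) / (t1 - t0) if t1 > t0 else 0.0
            return tuple(a + (b - a) * u for a, b in zip(c0, c1))
    return gradient[-1][1]


def sprite_frame(age, lifespan, frame_count):
    """Pick which frame of a flipbook animation (e.g. a walk cycle) to show."""
    return min(int(normalized_age(age, lifespan) * frame_count), frame_count - 1)
```

Because everything is keyed to normalized age, a spark that lives half a second and a smoke puff that lives ten seconds can share the same gradient or flipbook.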

Particle Effectors
